In today’s digital world, data is like the new oil. Companies everywhere are racing to collect information to make better decisions. However, building the systems that move this data—called pipelines—is not easy. This article explores the top Data Engineering Challenges that professionals face right now. By understanding these hurdles, businesses can build stronger, faster, and more reliable systems. Whether you are a beginner or a manager, knowing these obstacles is the first step to solving them. Let’s dive into the complex world of modern data engineering.

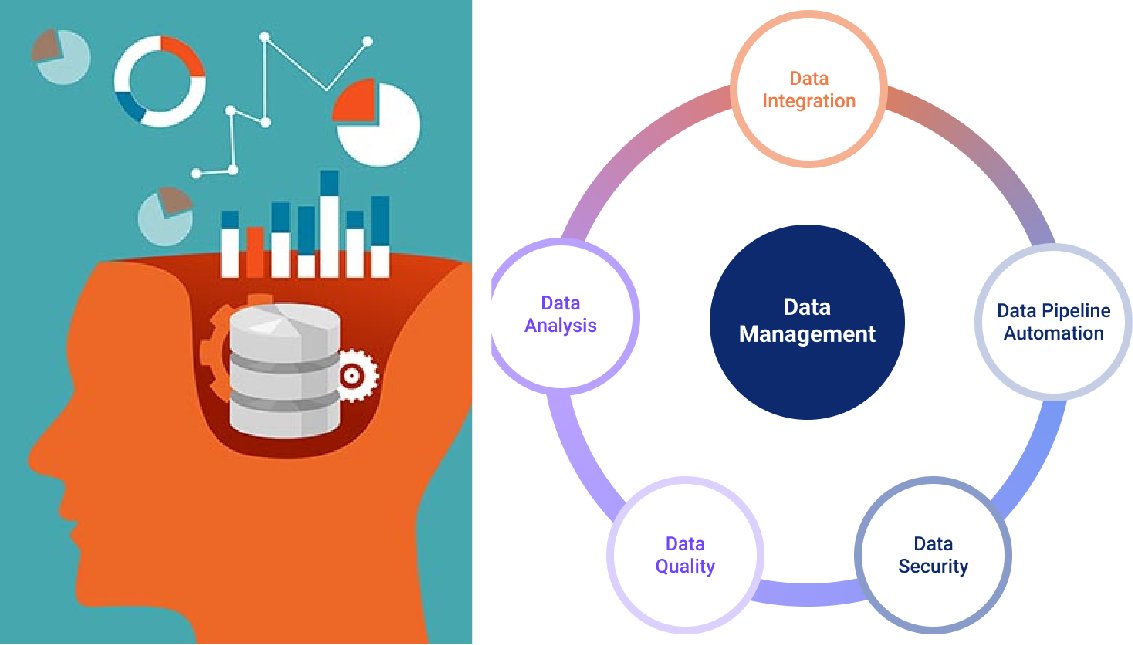

The Complexity of Data Integration

One of the biggest Data Engineering Challenges is bringing data together from many different places. Imagine trying to cook a meal, but your ingredients are in five different grocery stores. This is what data engineers face daily. They have data coming from websites, mobile apps, social media, and internal sales systems. Each source often speaks a different “language” or format.

This creates a messy situation. Engineers must write special code to translate all this information into a single format that the company can use. If one source changes its format, the whole pipeline might break. This constant need for maintenance is a major headache. Solving these Data Engineering Challenges requires robust tools that can handle many different types of connections automatically.
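To make the translation step concrete, here is a minimal sketch in Python. The source names (`web`, `mobile`), the field names, and the assumption that every source sends Unix epoch seconds are all hypothetical; a real pipeline would have its own schema.

```python
from datetime import datetime, timezone

# Hypothetical field mappings: each source names the same facts differently.
FIELD_MAPS = {
    "web": {"user_id": "customer_id", "amt": "amount", "ts": "timestamp"},
    "mobile": {"uid": "customer_id", "total": "amount", "time": "timestamp"},
}


def normalize(source: str, record: dict) -> dict:
    """Translate one source-specific record into the shared schema."""
    mapping = FIELD_MAPS[source]
    missing = [field for field in mapping if field not in record]
    if missing:
        # Fail loudly when a source changes its format instead of
        # silently passing incomplete rows downstream.
        raise ValueError(f"{source} record is missing fields: {missing}")
    unified = {target: record[field] for field, target in mapping.items()}
    unified["amount"] = float(unified["amount"])
    # Assumption: every source sends Unix epoch seconds.
    unified["timestamp"] = datetime.fromtimestamp(
        int(unified["timestamp"]), tz=timezone.utc
    ).isoformat()
    unified["source"] = source
    return unified
```

Raising an error on a missing field is a deliberate choice here: when a source changes its format, the pipeline stops at the translation step rather than breaking somewhere harder to trace.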

Ensuring High Data Quality

Perhaps nothing is more important than trust. If a business cannot trust its data, it cannot make good decisions. Ensuring data quality is one of the most persistent Data Engineering Challenges in the industry. Bad data includes missing values, duplicate records, or information that is just plain wrong. For example, a customer might be listed twice with different addresses.

Cleaning this data takes a lot of time and effort. It is not just about fixing errors once; it is about keeping the data clean forever. Engineers use automated testing to catch errors before they reach the final report. Without these checks, bad data spreads like a virus. Overcoming these Data Engineering Challenges means building strict quality gates at every step of the pipeline.
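A quality gate can be as simple as a function that every batch must pass through. The sketch below is one possible shape, not a standard implementation; the function name and the rejection reasons are illustrative assumptions.

```python
def quality_gate(rows, key, required):
    """Split rows into clean rows and a list of (row, reason) rejects."""
    # The key itself must be present too; dict.fromkeys removes repeats.
    fields = list(dict.fromkeys([key, *required]))
    seen = set()
    clean, rejected = [], []
    for row in rows:
        missing = [f for f in fields if row.get(f) in (None, "")]
        if missing:
            rejected.append((row, f"missing: {', '.join(missing)}"))
        elif row[key] in seen:
            rejected.append((row, "duplicate key"))
        else:
            seen.add(row[key])
            clean.append(row)
    return clean, rejected
```

Keeping the rejected rows together with a reason, instead of dropping them, makes it possible to report how much bad data each source produces over time.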

Scalability and Performance Issues

As companies grow, their data grows too. A system that works well for 1,000 customers might crash when you have 1,000,000 customers. Handling this growth is one of the classic Data Engineering Challenges. When data volume spikes—like during a Black Friday sale—pipelines can slow down or stop completely. This latency frustrates users who need real-time answers.


Engineers must design systems that expand easily. This is often done using cloud technologies like AWS or Google Cloud. These platforms allow you to add more power when you need it. However, designing for scale is tricky and expensive. Balancing speed with cost is one of the critical Data Engineering Challenges that requires careful planning and smart architecture.

Security and Data Privacy

With great data comes great responsibility. Protecting sensitive information is one of the most serious Data Engineering Challenges today. Governments have passed strict laws like GDPR in Europe and CCPA in California. These laws say that companies must keep customer data safe and private. If a pipeline leaks data, the fines can be huge.

Engineers must build security into the pipeline from day one. This involves encrypting data so hackers cannot read it and controlling who has access to specific files. It adds a layer of complexity to every project. Navigating these legal and technical requirements is one of the Data Engineering Challenges that keeps data teams awake at night. Safety must always be a top priority.
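Real encryption is usually handled by a vetted library or by the storage platform, so the sketch below does not attempt it. Instead it illustrates the access-control half of the idea, using keyed hashing (pseudonymization) to hide columns a role is not allowed to read. The roles, column names, and policy table are hypothetical.

```python
import hashlib
import hmac

# Hypothetical policy: which columns each role may read in clear text.
ROLE_COLUMNS = {
    "analyst": {"order_id", "amount"},
    "support": {"order_id", "amount", "email"},
}


def pseudonymize(value: str, secret: bytes) -> str:
    """Replace a value with a keyed hash so rows can still be joined on it."""
    return hmac.new(secret, value.encode("utf-8"), hashlib.sha256).hexdigest()


def view_for(role: str, row: dict, secret: bytes) -> dict:
    """Return a copy of the row with columns outside the role's policy hidden."""
    allowed = ROLE_COLUMNS.get(role, set())
    return {
        column: value if column in allowed else pseudonymize(str(value), secret)
        for column, value in row.items()
    }
```

An unknown role gets an empty allow-list, so every column is hidden by default. The secret key would need to be stored outside the code, for example in a secrets manager.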

Managing Real-Time Data Streams

In the past, companies looked at data once a day. Today, they want to see it the moment it happens. Processing real-time streaming data is one of the emerging Data Engineering Challenges. Think about a credit card company detecting fraud. They need to analyze the transaction in milliseconds, not hours.

Building streaming pipelines is much harder than building traditional batch pipelines. If the system goes down for even a minute, valuable data might be lost forever. Tools like Apache Kafka and Apache Flink are popular, but they are hard to learn. Mastering these technologies to solve real-time Data Engineering Challenges is a highly sought-after skill in the job market.
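To give a feel for stream processing without any framework, here is a small sliding-window check in plain Python, loosely modeled on the fraud example above. It is a toy under stated assumptions: the class name and thresholds are made up, events for each card are assumed to arrive in time order, and nothing here reflects how Kafka or Flink work internally.

```python
from collections import defaultdict, deque


class VelocityCheck:
    """Flag a card that makes too many transactions inside a sliding time window."""

    def __init__(self, max_events: int, window_seconds: float):
        self.max_events = max_events
        self.window_seconds = window_seconds
        self._events = defaultdict(deque)  # card_id -> recent event timestamps

    def observe(self, card_id: str, timestamp: float) -> bool:
        """Record one event; return True if the card now exceeds the limit."""
        events = self._events[card_id]
        events.append(timestamp)
        # Drop timestamps that have fallen out of the window.
        while events and events[0] <= timestamp - self.window_seconds:
            events.popleft()
        return len(events) > self.max_events
```

Each event is handled the moment it arrives and only the recent timestamps are kept in memory, which is the core difference from a batch job that waits for the full day of data.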

Handling Unstructured Data

Not all data fits neatly into rows and columns like an Excel sheet. Today, we have emails, videos, audio recordings, and images. Dealing with this “unstructured” data is one of the growing Data Engineering Challenges. Traditional databases struggle to store and search this kind of information effectively.

To solve this, engineers use “Data Lakes.” A data lake can store anything, but it can easily turn into a “Data Swamp” if not managed well. Organizing this messy data so it can be analyzed is difficult. Extracting meaning from a video file or a PDF requires advanced AI tools. This shift toward unstructured content presents unique Data Engineering Challenges for modern teams.

The Shortage of Skilled Talent

Sometimes the problem isn’t the technology; it is the people. One of the most critical Data Engineering Challenges is simply finding enough qualified workers. Data engineering is a hard job. It requires knowledge of coding, database design, cloud systems, and security. It is rare to find one person who knows all of this.

Companies often struggle to hire and keep good engineers. This leads to small teams being overworked. When teams are tired, they make mistakes, leading to more bugs in the pipeline. Addressing these Data Engineering Challenges involves investing in training and building a strong team culture. Education and mentorship are key to solving the talent gap.

Tool Selection and Integration Fatigue

The data world changes very fast. Every month, a new tool promises to solve all your problems. Deciding which tools to use is one of the strategic Data Engineering Challenges. Should you use open-source software that is free but hard to manage? Or should you pay for a managed service?

Choosing the wrong tool can be an expensive mistake. Furthermore, making all these different tools work together is a nightmare. This is often called “tool fatigue.” Engineers spend more time fixing the tools than working on the data. Simplifying the tech stack is essential to overcoming these Data Engineering Challenges and maintaining a healthy and efficient work environment.

Data Governance and Documentation

Who owns the data? Who is allowed to change it? These questions relate to governance. Lack of clear governance is one of the silent Data Engineering Challenges. Without rules, pipelines become chaotic. Different teams might calculate “revenue” in different ways, leading to confusion in meetings.

Documentation is also a big issue. Engineers often forget to write down how the system works. When an engineer leaves the company, they take that knowledge with them. This leaves the new team blind. Enforcing strict documentation and ownership rules helps mitigate these Data Engineering Challenges and keeps the pipeline understandable for everyone.

Cost Management in the Cloud

Cloud computing solved many problems, but it created a new one: cost. One of the financial Data Engineering Challenges is keeping the bills down. Cloud providers charge for storage and computing power, so it is very easy to leave a powerful server running by mistake and rack up thousands of dollars in fees.

Engineers now need to be part accountants. They must optimize their code to run efficiently to save money. The practice of tracking and controlling cloud spending is called “FinOps.” Ignoring cost efficiency is dangerous: runaway cloud bills can seriously hurt a company that ignores these financial Data Engineering Challenges. Monitoring usage and setting budget alerts are mandatory for modern data teams.
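A budget alert does not have to be complicated. The sketch below projects the month-end bill from the spending so far and compares it with a budget. The function names, the linear projection, and the 80% warning ratio are illustrative assumptions; cloud providers offer their own budgeting tools that work differently.

```python
def projected_monthly_cost(spend_to_date: float, day_of_month: int, days_in_month: int) -> float:
    """Linear projection: assume the daily average so far holds for the whole month."""
    if not 1 <= day_of_month <= days_in_month:
        raise ValueError("day_of_month must fall inside the month")
    return spend_to_date / day_of_month * days_in_month


def budget_alert(spend_to_date, day_of_month, days_in_month, budget, warn_ratio=0.8):
    """Return 'over', 'warning', or 'ok' based on the projected month-end cost."""
    projected = projected_monthly_cost(spend_to_date, day_of_month, days_in_month)
    if projected > budget:
        return "over"
    if projected >= budget * warn_ratio:
        return "warning"
    return "ok"
```

For example, spending 500 dollars in the first 10 days of a 30-day month projects to 1,500 dollars, which would trigger the "over" alert against a 1,000 dollar budget long before the bill arrives.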


Version Control for Data

Programmers use Git to track changes in their code. But tracking changes in data is much harder. Data versioning is one of the technical Data Engineering Challenges. If a machine learning model behaves strangely today, you need to know exactly what data was used to train it. If the data changes tomorrow, the model might break.

Tools like DVC (Data Version Control) are helping, but they are not perfect yet. Being able to “rewind” the data to a previous state is crucial for debugging. Without this ability, finding the root cause of an error is like finding a needle in a haystack. Solving these Data Engineering Challenges ensures reproducibility in science and business analytics.
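One basic building block behind data versioning is a content fingerprint: a hash that changes whenever the data changes. The sketch below shows the idea in plain Python. It is not how DVC is implemented; the function name is made up, and it assumes the rows are small JSON-serializable dictionaries that fit in memory.

```python
import hashlib
import json


def dataset_fingerprint(rows: list[dict]) -> str:
    """Return a SHA-256 digest that changes whenever the dataset's content changes."""
    digest = hashlib.sha256()
    # Serialize each row canonically, then sort so row order does not matter.
    for line in sorted(json.dumps(row, sort_keys=True) for row in rows):
        digest.update(line.encode("utf-8"))
        digest.update(b"\n")
    return digest.hexdigest()
```

Storing this fingerprint next to each trained model gives a quick way to check later whether the training data is still the same as it was.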

Keeping Up with Rapid Change

The final point is the speed of the industry itself. Keeping your skills sharp is one of the personal Data Engineering Challenges for every professional. What was standard practice two years ago might be obsolete today. For example, the shift from ETL (Extract, Transform, Load) to ELT (Extract, Load, Transform) changed how everyone works.
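The difference between ETL and ELT is easier to see side by side. This sketch uses Python's built-in `sqlite3` module as a stand-in for a data warehouse. The table names and sample orders are invented for illustration.

```python
import sqlite3

# Invented sample data: messy customer names and amounts stored as text.
raw_orders = [("o1", " Alice ", "19.5"), ("o2", "BOB", "5.00"), ("o3", "alice", "0.25")]


def etl(conn, rows):
    """ETL: clean in application code first, then load only the finished rows."""
    conn.execute("CREATE TABLE orders_etl (order_id TEXT, customer TEXT, amount REAL)")
    cleaned = [(oid, name.strip().lower(), float(amount)) for oid, name, amount in rows]
    conn.executemany("INSERT INTO orders_etl VALUES (?, ?, ?)", cleaned)


def elt(conn, rows):
    """ELT: load the raw rows untouched, then transform inside the database with SQL."""
    conn.execute("CREATE TABLE orders_raw (order_id TEXT, customer TEXT, amount TEXT)")
    conn.executemany("INSERT INTO orders_raw VALUES (?, ?, ?)", rows)
    conn.execute(
        """
        CREATE TABLE orders_elt AS
        SELECT order_id,
               lower(trim(customer)) AS customer,
               CAST(amount AS REAL) AS amount
        FROM orders_raw
        """
    )


conn = sqlite3.connect(":memory:")
etl(conn, raw_orders)
elt(conn, raw_orders)
```

Both paths end with the same cleaned table, but the ELT path also keeps `orders_raw`, so the transformation can be changed and rerun later without fetching the data again.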

Continuous learning is required. Engineers must read blogs, attend conferences, and take courses. This pressure can lead to burnout. However, it also makes the field exciting. Embracing change rather than fearing it is the mindset needed to overcome these persistent Data Engineering Challenges and build a successful career.

Frequently Asked Questions

1. What are the most common Data Engineering Challenges for beginners?

For beginners, the biggest hurdles are usually learning to integrate data from different sources and ensuring data quality. Learning how to clean messy data and make different systems talk to each other is the first major step to master in this field.

2. How does cloud computing affect Data Engineering Challenges?

Cloud computing solves scalability issues but introduces cost management challenges. While it is easier to store large amounts of data, engineers must be careful not to overspend. Managing cloud budgets is now a key part of the job.

3. Why is data privacy considered one of the top Data Engineering Challenges?

With laws like GDPR, protecting user data is a legal requirement. If engineers fail to secure data pipelines, companies face massive fines and loss of reputation. Security must be built into every step of the engineering process.

4. Can AI tools help solve Data Engineering Challenges?

Yes, AI is becoming very helpful. AI tools can automatically detect bad data, suggest how to clean it, and even write basic code for pipelines. However, human oversight is still needed to handle complex architecture and strategy.

5. How often do Data Engineering Challenges change?

The specific tools change very often, but the core challenges—like quality, speed, and security—remain the same. The industry evolves rapidly, so engineers must constantly learn new methods to address these fundamental problems effectively.